
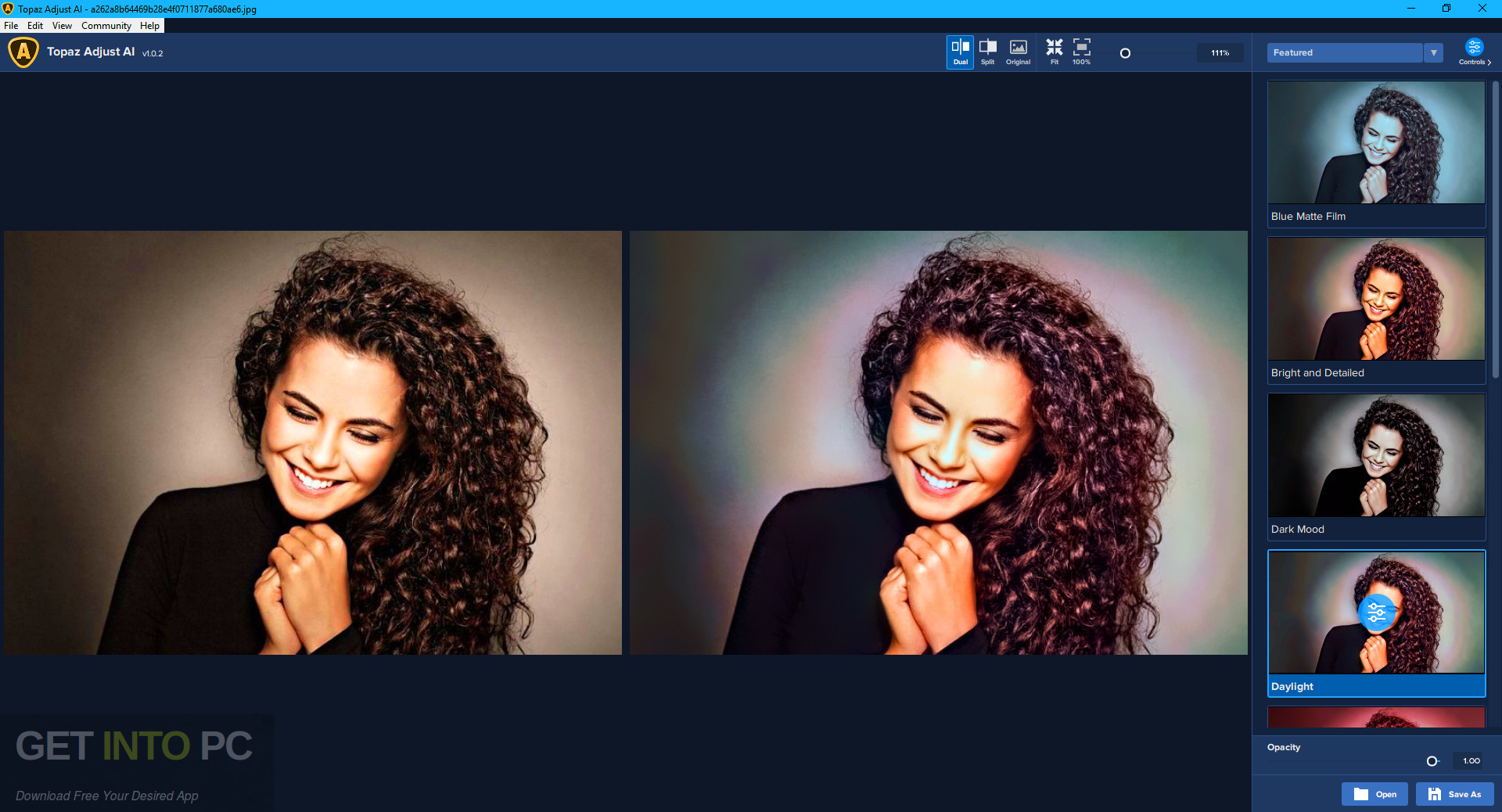

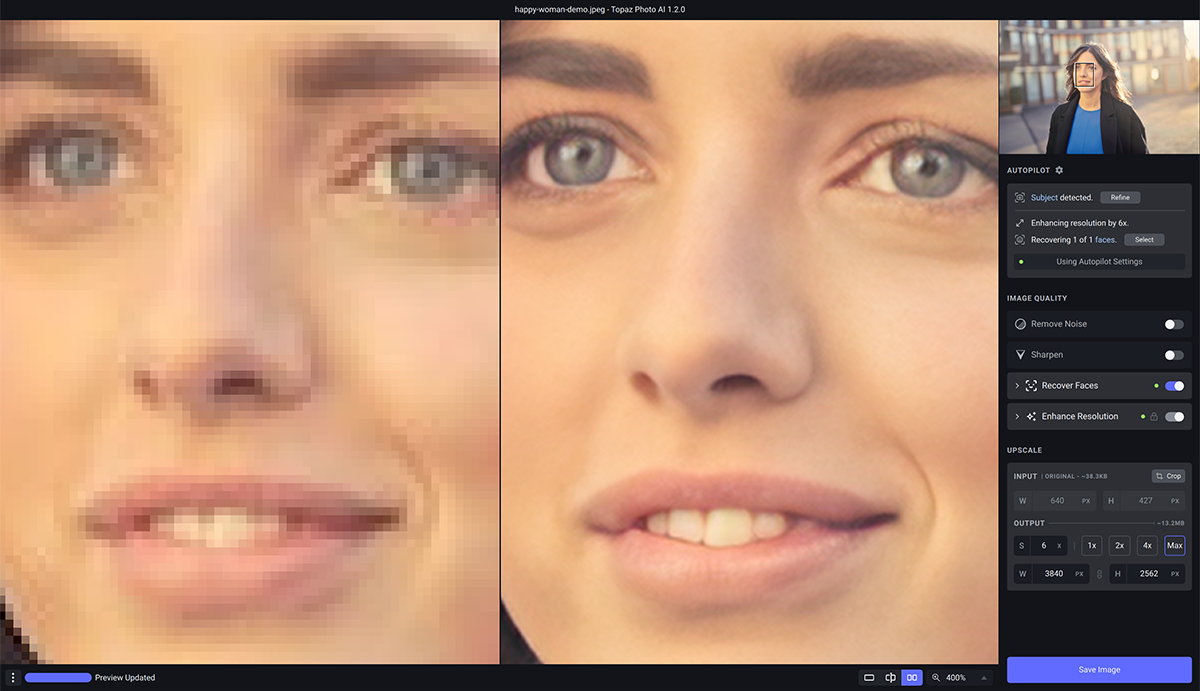

It seems to me that Eric Chan really should update the Denoise AI paper he published on 8: "Finally, we built our machine learning models to take full advantage of the latest platform technologies, including NVIDIA's TensorCores and the Apple Neural Engine. Using these technologies enables our models to run faster on modern hardware."

He wrote this, but soon afterwards Adobe said it could not use the Neural Engine until Apple fixed new bugs in Ventura. DxO also wrote about its troubles with the Neural Engine in Ventura, though it had no problems in Monterey. The paper should be updated either to remove the mention of the Apple Neural Engine or to add a note that Adobe cannot use it until Apple fixes the Neural Engine bugs. New emojis for macOS are a much higher priority, I suppose.

For those who are not familiar with the Apple Silicon GPU and Nvidia's similar CUDA cores, or with Nvidia's Tensor cores (which are similar to the Neural Engine), see this:

Ever wonder why Apple lists its GPU cores as 64 or 76, but an Nvidia RTX 4080 has 8,704 CUDA cores? At the highest level is the Graphics Processing Unit, aka the GPU, a parallel processor optimized for executing many instructions in parallel, as opposed to traditional CPUs, which are optimized for sequential processing. The data is funneled to streaming multiprocessors, which in Apple's vernacular are referred to as cores. In Apple Silicon, at least for the M1 series, each core is split into 16 Execution Units, each with 8 Arithmetic Logic Units (ALUs). For example, the top-end M1 Ultra has 64 cores with 1024 Execution Units, or 8192 ALUs.
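Taking the M1-series figures above at face value (16 Execution Units per core and 8 ALUs per Execution Unit), the M1 Ultra totals follow from simple multiplication. This is only a check of the arithmetic as stated, not an independent hardware specification:

$$
\begin{aligned}
16\ \tfrac{\text{EUs}}{\text{core}} \times 8\ \tfrac{\text{ALUs}}{\text{EU}} &= 128\ \tfrac{\text{ALUs}}{\text{core}} \\
64\ \text{cores} \times 16\ \tfrac{\text{EUs}}{\text{core}} &= 1024\ \text{EUs} \\
64\ \text{cores} \times 128\ \tfrac{\text{ALUs}}{\text{core}} &= 8192\ \text{ALUs}
\end{aligned}
$$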
Unfortunately, it seems likely the news is bad. I just saw a report from someone who tried LrC 13.0 Denoise AI on an Apple Silicon Mac running Sonoma, and the times were the same as they have been since the beginning of this Apple/Adobe/DxO debacle, so it seems likely that the Neural Engine is still not being used. Apple and Adobe have been very quiet about this for months, so I think the message being sent is that Apple has no plans to ever fix these bugs.

We used Topaz Sharpen AI Version 4 as a Photoshop and Lightroom plug-in. Operation is simple and the interface is extremely easy to use. You can choose from four views: Single, Split View (with slider), Side-by-Side, or Comparison (4-up). The results were consistently more than just good: they are remarkable, in that experienced users can recognize the ways in which Sharpen AI is superior to traditional unsharp masks and filters. Processing time can be long, depending on the parameters selected. Avoid displaying the image at 100% during preview, or you also risk long preprocessing times. For finely detailed features like eyes, feathers, leaves, and stars, Topaz Labs recommends using the Too Soft model instead of traditional sharpening.

The Too Soft sharpening model works best for giving already-good images a little extra sharpness without diminishing their other qualities. The Out of Focus sharpening model reduces lens blur caused by missed focus. It can't work miracles on images that are just fuzzy blobs, but it can save many images that would otherwise be unusable.

The Motion Blur sharpening model reduces the softness caused by camera or subject movement. Note that conventional Image Stabilization (IS) combats the effects of camera shake, but it cannot correct for subject movement.

Even if you are a superhero who never shoots out-of-focus images, we're sure that the subject occasionally moves, as shown here.
